OpenAI-compatible API. Zero cloud dependency.

Run serious local AI on your Mac without rebuilding your stack.

AFM exposes Apple Foundation Models and Hugging Face MLX models through a fast Swift server, with streaming chat completions, tool calling, WebUI support, backend gateway proxying, and Vision OCR.

- Built in Swift for Apple Silicon and Metal

- Works with OpenAI SDK clients and coding agents

- No Python runtime required for the core server

Why AFM

One local runtime, four practical modes.

The codebase is organized around a shared OpenAI request and response contract, then routed into Foundation, MLX, Vision, or gateway-backed flows. That makes AFM useful as both a product and an infrastructure layer.

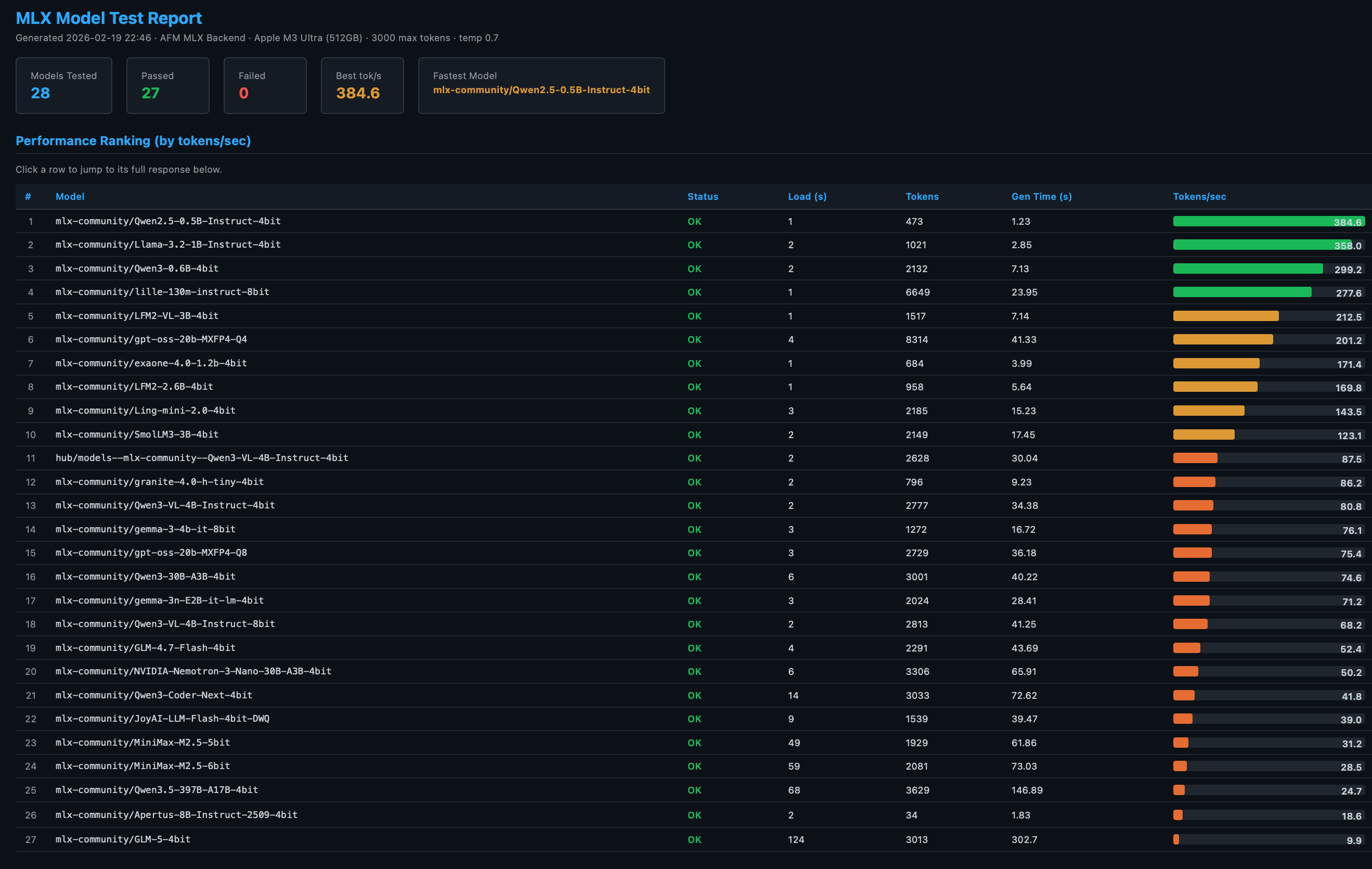

MLX inference

Run Hugging Face MLX models with streaming, top-k and min-p sampling, reasoning extraction, prompt prefix caching, KV-cache quantization, and tool call parsing.

- Server mode and single-prompt mode

- Works with chat, coding, and VLM models

- OpenAI-compatible `/v1/chat/completions`
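
As a rough illustration of the `/v1/chat/completions` shape mentioned above, the sketch below builds a standard OpenAI-style chat request using only the Python standard library. The base URL, port, and model name are placeholders rather than AFM defaults; substitute whatever address and model your server reports at startup.

```python
import json
import urllib.request

# Assumption: placeholder address, not a documented AFM default.
BASE_URL = "http://localhost:9999"


def build_chat_request(base_url, model, prompt, stream=False):
    """Build a POST request for an OpenAI-style chat completion."""
    body = {
        "model": model,  # hypothetical model id; use one listed by /v1/models
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def first_message_text(response_body):
    """Extract the assistant text from a non-streaming response dict."""
    return response_body["choices"][0]["message"]["content"]


# Usage against a running server (not executed here):
#   req = build_chat_request(BASE_URL, "example-model", "Say hello.")
#   with urllib.request.urlopen(req) as resp:
#       print(first_message_text(json.load(resp)))
```

Because the request and response follow the OpenAI shape, the same helper should work unchanged whether the server is running an MLX model or another mode.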

Apple Foundation Models

Start AFM with no model download and expose Apple’s on-device model through the same API, with structured output support and WebUI access.

- Fast setup for native local inference

- Same client shape as MLX mode

- Good fit for app prototypes and internal tools

Gateway mode

Auto-discover local backends like Ollama, LM Studio, Jan, and compatible endpoints, then expose them through one AFM model listing and proxy surface.

- Unified `/v1/models` inventory

- Portable developer setup for teams

- Useful bridge for existing desktop tooling
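
To make the unified `/v1/models` inventory concrete, here is a minimal sketch that reads an OpenAI-style model list and extracts the model ids. It assumes the response uses the usual `{"object": "list", "data": [...]}` layout; the base URL passed to `fetch_models` is a placeholder, and the helper names are illustrative.

```python
import json
import urllib.request


def fetch_models(base_url):
    """GET /v1/models and return the decoded JSON body."""
    url = base_url.rstrip("/") + "/v1/models"
    with urllib.request.urlopen(url) as resp:
        return json.load(resp)


def model_ids(models_response):
    """Return the ids from an OpenAI-style model list response."""
    return [entry["id"] for entry in models_response.get("data", [])]


def has_model(models_response, model_id):
    """Check whether a given id appears in the inventory."""
    return model_id in model_ids(models_response)


# Usage against a running gateway (not executed here):
#   inventory = fetch_models("http://localhost:9999")  # placeholder address
#   print(model_ids(inventory))
```

A client can call `has_model` before sending a chat request, so it fails early when a backend it expected has not been discovered.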

Vision OCR

Extract text and tables from images and PDFs using Apple Vision from the same CLI family, useful for automation, document pipelines, and local capture workflows.

- Plain-text OCR output

- Table extraction as CSV

- Fits naturally beside LLM workflows

Demo

A product site, not a placeholder.

The front page can host real product motion, model reports, and usage examples. This implementation already includes space for video demos, screenshots, and technical proof points.

How To

Start from the mode you need.

These recipes mirror the actual command shapes exposed by the AFM CLI. Use them as launch points for docs, tutorials, and support content.

Architecture

Deep enough for practitioners.

AFM is not just a launcher script. The site should explain why the stack is credible: typed request models, consistent OpenAI responses, SSE streaming, Vapor routing, model services, backend discovery, and vendor patching for MLX support.

`main.swift` dispatches root, `mlx`, and `vision` modes

Root mode defaults to serve, while MLX and Vision subcommands stay isolated enough to avoid flag collisions.

`Server.swift` wires `/health`, `/v1/models`, and `/v1/chat/completions`

CORS, large payload handling, and WebUI compatibility stubs are already part of the server behavior.
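
A readiness probe against `/health` might look like the sketch below. It assumes only that a healthy server answers the route with a 2xx status; the response body is not inspected because its format is not described here, and the base URL is a placeholder.

```python
import urllib.error
import urllib.request


def health_url(base_url):
    """Join the base URL with the /health route."""
    return base_url.rstrip("/") + "/health"


def is_healthy(base_url, timeout=2.0):
    """Return True if GET /health answers with a 2xx status, else False."""
    try:
        with urllib.request.urlopen(health_url(base_url), timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or a non-2xx HTTP error.
        return False
```

A launcher script can poll `is_healthy` in a short loop before sending the first chat request.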

Foundation and MLX controllers preserve one response schema

Streaming chunks, nullable content encoding, and tool call finish reasons are kept consistent for SDK compatibility.
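
The consistency described above matters mostly to streaming clients. The sketch below parses OpenAI-style SSE lines and tolerates a `null` content field and a trailing chunk that carries only a finish reason. It assumes the conventional `data: {...}` framing with a final `data: [DONE]` sentinel; the sample chunks in the usage comment are illustrative and are not captured AFM output.

```python
import json


def iter_stream_events(lines):
    """Yield (content_delta, finish_reason) pairs from OpenAI-style SSE lines."""
    for raw in lines:
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and SSE comment lines
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            delta = choice.get("delta") or {}
            # Content may be absent or null, e.g. on role-only or tool-call chunks.
            yield delta.get("content") or "", choice.get("finish_reason")


def collect_text(lines):
    """Concatenate content deltas and return (text, last finish_reason seen)."""
    parts, finish = [], None
    for content, reason in iter_stream_events(lines):
        parts.append(content)
        finish = reason or finish
    return "".join(parts), finish


# Usage with illustrative chunks:
#   collect_text([
#       'data: {"choices":[{"delta":{"content":"Hi"},"finish_reason":null}]}',
#       'data: {"choices":[{"delta":{},"finish_reason":"stop"}]}',
#       'data: [DONE]',
#   ])
```

A client that sees a finish reason of `tool_calls` instead of `stop` would read the tool call payload rather than the concatenated text.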

Build, release, and patch flows are already scripted

The site can point users to stable, nightly, and source builds, plus generated reports, without inventing a separate release process.

FAQ

Questions users will actually ask.

What does AFM replace?

AFM can replace a hosted OpenAI-compatible endpoint for local workflows, or sit in front of local backends so apps only have to target one API surface.

Do I need Python?

No, not for the core server and CLI. AFM is built in Swift. Python is only relevant for optional ecosystem tooling around it.

Can I use existing OpenAI clients and coding tools?

Yes. AFM exposes `GET /v1/models` and `POST /v1/chat/completions`, uses SSE for streaming, and follows OpenAI-style request and response shapes.

What are the real platform constraints?

AFM targets Apple Silicon Macs and current macOS/Xcode toolchains. MLX mode needs MLX-format models; gateway mode can bridge to other local engines.

Is this only a chat UI?

No. The embedded WebUI is a convenience layer. The main value is the local runtime, API compatibility, tool calling, OCR, and gateway behavior.

Download

Install stable, test nightly, or build from source.

This site is ready to send users toward the release flow that already exists in the repository and GitHub releases.

Stable

v0.9.6

For users who want the current packaged release.

Stable release

Nightly

v0.9.7-next

For users who want the current forward-looking branch with the latest fixes and reports.

Nightly build

Source

Swift package

For contributors and teams who want patch control, local builds, and repo-level customization.

Build from source

Homebrew

`brew install scouzi1966/afm/afm`

pip

`pip install macafm`

Source

`./Scripts/build-from-scratch.sh`